August 11, 2022

The best insights come from gathering a variety of data. It’s important to see and quantify what people do, and it’s just as important to know why people do what they do.

Data without context only tells part of the story. Triangulating multiple data sources often gets us closer to understanding the context around decision making, how folks use products, and how they feel about them.

I’m in favor of mixed methods, but I often blend methods too. Research sessions don’t have to be just interviews or just usability testing. A survey doesn’t have to be something that is sent out into the ether that you hope someone responds to.

Two methods I’ve often combined are surveys and 1:1 interviews. I blend them using two approaches I call moderated surveys and generative interview guides. Each approach takes the best parts of survey and interview methods and combines them for flexible but grounded data collection.

If you’re conducting a study in which you know who your participants are, you may be able to conduct moderated surveys, which allow you to view responses as they’re submitted. This approach combines the consistency of a survey with the personal and adaptable nature of an interview.

Technically, it also involves observation, and can be used in concert with other tools such as eye tracking, telemetry, or mid-task feedback measures. Moderated surveys are great to use when you know you want to conduct a survey, and you’re able to review responses in real time to follow up with participants.

I’ve used this method often while conducting video game playtests. Surveys allow players to remain relatively autonomous during the test, interrupted by the moderator as little as possible. It’s a great way to maintain consistency of questions answered among participants, and allows each of them to play at their own pace, taking each survey at its designated spot in the game flow.

The follow-up questions that come from reviewing the survey responses are then asked at a time that makes sense for each participant, making sure not to interrupt them during a critical gameplay moment.

You’ve set up a test session with your participants either remotely or in person. You set all participants up with the content they’ll need to review. You observe their behavior as they complete the assigned task.

You make note of any behaviors you’re particularly interested in. This may include counts or yes/no coding of specific behaviors. If you’re lucky, telemetry does this for you. You have participants answer one or more surveys. You might have participants answer a set of questions after onboarding into a new experience, another set of questions in the middle of the experience, and a final set of questions at the end.

As folks fill out their surveys (which include a mix of ratings, rankings, single choice, multiple select, and open-ended questions), you watch responses as they are submitted. You notice aspects of some of the responses that make you curious to know more. You follow up with those participants about their answers either in person or through voice or text channels. This survey has turned into an interview.

You have data from all participants that is consistent, and you have deeper context for any answers that weren’t clear or begged for further inquiry. Participants answered questions about the experience as close to the experience as possible, with minimal interruption.

You have efficiently gathered quantitative and qualitative behavioral and attitudinal data from multiple individuals at the same time. You combine data sources to see what participants did and how they perceived the experience.
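As a rough illustration of combining these data sources, here is a minimal Python sketch. It assumes you have a behavioral count per participant (from telemetry or your own coding) and a survey rating with an optional comment. The participant IDs, field names, and thresholds are all hypothetical, made up for this example.

```python
from statistics import mean

# Hypothetical observation data: how many times each participant opened the help menu.
behavior = {"P1": 0, "P2": 4, "P3": 1}

# Hypothetical survey data: ease-of-use rating (1-5) and an open-ended comment.
survey = {
    "P1": {"ease": 5, "comment": "Felt natural."},
    "P2": {"ease": 2, "comment": "I kept getting lost."},
    "P3": {"ease": 4, "comment": ""},
}


def combine(behavior, survey):
    """Join behavioral and attitudinal data on participant ID."""
    rows = []
    for pid in sorted(behavior.keys() & survey.keys()):
        rows.append({"id": pid, "help_opens": behavior[pid], **survey[pid]})
    return rows


def needs_follow_up(row, low_rating=2, high_help=3):
    """Flag a participant whose rating is low or who leaned on help a lot.

    The thresholds are arbitrary placeholders; choose ones that fit your study.
    """
    return row["ease"] <= low_rating or row["help_opens"] >= high_help


rows = combine(behavior, survey)
follow_ups = [r["id"] for r in rows if needs_follow_up(r)]
average_ease = mean(r["ease"] for r in rows)

print("Follow up with:", follow_ups)
print("Average ease rating:", round(average_ease, 2))
```

A flag like this only suggests who to talk to next. The follow-up conversation is still where the context comes from.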

Generative interview guides position the interview from the participant's point of view, using their own words. They are a good choice when you know you want to conduct an interview and gather rich qualitative data about user attitudes, and you want to focus on the participant’s point of view as much as possible.

Let’s say you’re working with instructional designers who are interested in how app users respond to educational characters in an onboarding experience. Is the tone coming across as intended for each character? Is each character understandable, believable, interesting? Are there any red flags we should be aware of?

For example...

You conduct interviews after participants experience a chunk of low fidelity content involving three main characters. The content involves character art, voiceovers, and instructional directives.

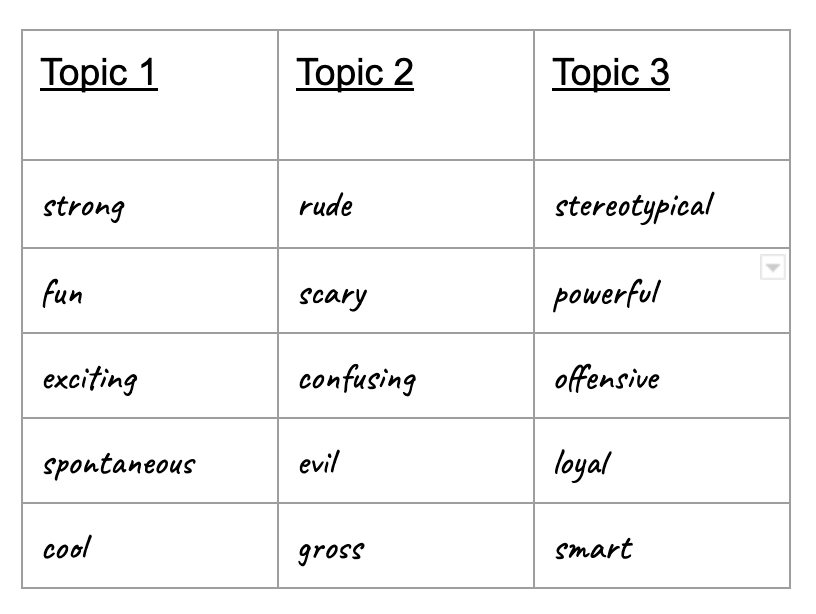

In addition to assessing understanding of the onboarding experience, you give participants a piece of paper with three columns, each representing one of the characters. You ask participants to write 3-5 adjectives to describe each character. These responses serve as a springboard for the interviews. You record the conversations and allow each participant to guide you through their experience of each character.

The tangible artifact of the paper you have participants fill out gives the designers a way to gauge user perceptions of these characters against their own intentions and expectations. The synthesized interview data offers greater context.
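If you transcribe the adjective sheets, a small script can tally them and surface words the designers did not intend. The Python sketch below is one possible way to do that, not a prescribed analysis. The character names, intended adjectives, and participant responses are invented for illustration.

```python
from collections import Counter

# Hypothetical adjectives the designers intended for each character.
intended = {
    "Captain": {"confident", "warm", "funny"},
    "Robot": {"curious", "helpful", "quirky"},
    "Owl": {"wise", "patient", "calm"},
}

# Hypothetical transcribed sheets, one dict per participant.
sheets = [
    {"Captain": ["Confident", "bossy", "loud"],
     "Robot": ["helpful", "curious", "cute"],
     "Owl": ["wise", "calm", "patient"]},
    {"Captain": ["bossy", "funny", "rude"],
     "Robot": ["quirky", "helpful", "curious"],
     "Owl": ["patient", "wise", "kind"]},
]


def tally(sheets):
    """Count how often each adjective was written for each character."""
    counts = {}
    for sheet in sheets:
        for character, adjectives in sheet.items():
            cleaned = (a.strip().lower() for a in adjectives)
            counts.setdefault(character, Counter()).update(cleaned)
    return counts


def unintended(counts, intended):
    """List adjectives participants used that designers did not intend."""
    return {
        character: sorted(
            adj for adj in counter if adj not in intended.get(character, set())
        )
        for character, counter in counts.items()
    }


counts = tally(sheets)
surprises = unintended(counts, intended)

for character, words in surprises.items():
    print(character, "->", words)
```

Exact string matching misses synonyms (for example, "kind" versus "warm"), so treat the output as a starting point for reading the interview data, not a replacement for it.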

You find that one of the characters was perceived as much more objectionable than intended, and designers make major changes to the tone for that character.

Another character was perceived as having some stereotypical attributes, which you are able to address thanks to this feedback. The third character was highly appreciated overall, and the specific characteristics participants called out are highlighted further.

The commenting and messaging features on Dscout allow you to easily use a blended strategy. Here are some examples of how you might use these approaches on Dscout:

One way to do this is to set up a Diary mission. Here you review entries as they come in. Some of the responses leave you wanting to know more. You can follow up with those participants about their answers by using comments or messages.

You can also comment on a specific question. For example, “Hi! I’m interested to know more about what you said here. Do you find this is often the case?” If you have a more general question that is not tied to a specific entry or answer, you can message scouts either in bulk or one at a time.

Another option is to set up a Live session to dig even deeper if you have the time to conduct 1:1 interviews. If you want to use a version of the generative interview guide, you can include it as one or more questions in your Diary mission.

Even if you don’t use the adjective method, responses to Diary questions can still serve as a springboard for your Live session. For example, if you’re assessing reactions from new users to a VR experience, their ratings or open-ended responses to your Diary questions can serve as starting points for your interviews.

Express is another tool you can use. Gather a large amount of data on a topic with an Express mission, then invite a subgroup of those participants to in-depth interviews to gather richer qualitative data, using their Express answers as your interview guide.

These approaches are intentionally flexible. The main idea is that surveys can be used as interview guides, and interviews can be approached somewhat like surveys. Experiment with blending methods together to see what works best for your research goals.

Approaching research problems creatively might lead you toward a mix of some other methods that work well. I would love to hear about what blend of methods works (or doesn't work) for you!

Laurel Brown has been a mixed methods UX researcher since 2017, specializing in games and global research. She lives on a farm where she grows tomatoes and makes art.